I collaborated with my old friends Torchbox on getting the new Chatham House website live on Drupal 7; I'm especially relieved that someone there did a great case study on drupal.org, so that I don't have to fill in all the details. Go and read that for broader details of the project build, and then come back. Please!

Using a whitelabel build and deployment tools

Chatham House were already on Drupal 6, although the build had grown quite tangled - understandably, over several years - and it was time to start afresh. Also, during my time at Torchbox, I built both:

- a "whitelabel" Drupal website build: essentially an extension of a standard install profile; using a selectable "restaurant menu" of features to install most basic configuration; then extending with custom code to perform cross-feature tying-together e.g. a document management feature, deployed across (and overriding)

- a deployment tool for managing Drupal codebases: a Python tool - "Metope" - which was the precursor to Drush instance, powering both the initial whitelabel build and configuration, and also managing (re-)deployment of the codebase going forwards (using Drush make, naturally!)

These tools make it attractive to start any big "re-"build from scratch - basically a "build" without the "re-" - and so it felt like a great opportunity to move at least the content over from D6 to a completely fresh D7 build, and move forwards with a new, performant, optimized platform for Chatham House's future needs.

My involvement: D6 to D7 migration

My work revolved entirely around the Migrate and Migrate D2D (Drupal-to-Drupal) modules, extending these to suit the D6 content, the D7 target structures, and also the tangles of new mappings and new information coming in from other sources during the transfer (typically from CSV.)

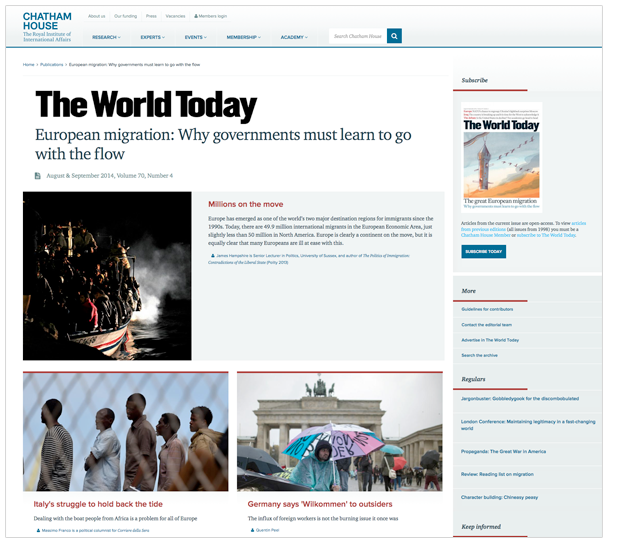

You'd think that, working on this project, I might at least have had the prospect of nice visuals along the way, like for example:

Sadly, my job was to implement the very guts of the content migration process, which means that on a good day I would usually spend a long time staring at screens that looked something like:

Other days - most days, in fact - all I would see all day would be the command prompt, multiple copies of gVim, and - if I was lucky - the cat.

Experiences of Migrate D2D

Generally, Migrate and Migrate D2D were good to work with. They handled rolling migrations fairly well. The metrics were occasionally useful, although we didn't bother with the field-by-field metrics, as all migrations were checked by eye anyway.

One thing I would recommend is running the migrations from the command prompt using the excellent Drush commands bundled with Migrate: this was less prone to browser-based interruptions and to occasional glitches in the metrics (sometimes metrics came out with negative values in the "unimported" column if migrations were run solely from the browser!)

Some of the trickier problems included:

- Working out what messages to worry about: messages were very noisy, and could be fired by all sorts of unimportant events; especially by the apachesolr module on dev where Solr wasn't present.

- Migrating from D6 files-plus-sometimes-node-wrappers to D7 media entities and/or files.

- Migrating dates - until you turn Date Migrate on, which nothing warns you about, date migration is patchy. Even then, from/to dates didn't migrate well without either repeat date management in D7 (which we didn't want) or custom code.

- Rolling back migrations: if you weren't careful, related content or media entities would sometimes roll back too, and never be re-migrated.

- Duplicate or broken nodes/references/files in D6: handling those exceptional conditions.

- Injecting new information into the model during migration, from remote CSV or from guesses made about the D6 content ("with this taxonomy, and this title prefix, it's probably...")

- Sixty gigabytes of files in D6. A lot of this could be removed, as it was audio (being migrated to a remote service.) But managing and migrating the 5+GB remaining, across dev, staging and production, over network connections of varying bandwidth, was still tricky.

- Migrating audio wrapper nodes with faked unique Soundcloud URLs: because Media requires resources to be unique. Also building a quick interface to edit those URLs after adding, because Media doesn't have one.

- Migrating one D6 node as many D7 nodes, in order to phase out D6 functionality like flexinode and fit better into the existing D7 whitelabel build.

- Sharing code between unrelated migrate classes: nodes and files had to be migrated using fundamentally different classes, and these had to work in a range of different environments, some of which were using PHP 5.3. That meant traits, which only arrived in PHP 5.4, could not be relied upon (and interfaces on their own cannot carry shared implementation), so shared helper objects were injected as dependencies instead.
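The last point, sharing behaviour through injected helper objects rather than traits, is easier to see in code than in prose. What follows is only a minimal, language-neutral sketch in Python: it is not the project's PHP and not the Migrate API, and every name in it (`ContentTypeGuesser`, `NodeMigration`, `FileMigration`, the "Event: " title prefix, the directory naming) is hypothetical. The helper itself borrows the "with this taxonomy, and this title prefix, it's probably..." kind of guess mentioned above.

```python
class ContentTypeGuesser:
    """Hypothetical shared helper: guesses a D7 type from D6 row data."""

    def __init__(self, rules, default="page"):
        # rules: list of (taxonomy_term, title_prefix, d7_type) tuples,
        # checked in order; the first matching rule wins.
        self.rules = rules
        self.default = default

    def guess(self, row):
        terms = row.get("terms", [])
        title = row.get("title", "")
        for term, prefix, d7_type in self.rules:
            if term in terms and title.startswith(prefix):
                return d7_type
        return self.default


class NodeMigration:
    """Stands in for a node migration class (hypothetical)."""

    def __init__(self, guesser):
        self.guesser = guesser  # helper is injected, not inherited

    def prepare_row(self, row):
        prepared = dict(row)  # copy, so the source row is left untouched
        prepared["type"] = self.guesser.guess(row)
        return prepared


class FileMigration:
    """Stands in for an unrelated file migration class (hypothetical)."""

    def __init__(self, guesser):
        self.guesser = guesser

    def destination_dir(self, row):
        # Directory layout is an assumption made purely for illustration.
        return "public://" + self.guesser.guess(row)


# One helper instance, shared by two classes with no common parent.
guesser = ContentTypeGuesser([("Events", "Event: ", "event")])
nodes = NodeMigration(guesser)
files = FileMigration(guesser)

row = {"title": "Event: Annual lecture", "terms": ["Events"]}
print(nodes.prepare_row(row)["type"])
print(files.destination_dir(row))
```

The two migration classes share no parent and use no language feature newer than plain objects, which is the property that mattered when some environments were stuck on an older PHP.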

I could go on for ages about these problems and how I solved them, but maybe the best thing is for interested parties to just ask me in the comments.

Summary

Using Migrate, rather than upgrading a Drupal site in situ (which drush sup can do), was a good way of taking advantage of improvements in D7, rather than having a D7 site that was wedded to understandably dated ways of working.

Media, Features and Display Suite were all improvements that could rapidly be taken advantage of, before you even consider Torchbox's custom install profile and tools. Besides, a lot of the old D6 cruft only made sense in the context of old D6 custom code, which the project determined to clear out in favour of doing things the right way from the outset.

Migrate and Migrate D2D provide a good framework for building D6-to-D7 migrations, and were often helpful in reporting what had gone wrong, if only to move your development back onto the right track. But documentation is quite fragmented, and so experience pays dividends. Expect to build a lot of object-oriented PHP code if you go down this route yourself.

Still, the end result is fantastic, thanks to the frontend and design teams that worked on it. And migrating just the content, and making it D7-shaped along the way - rather than being tied to the old D6 structure - meant that the frontenders were given wings to fly, which (I think it's fair to say) they jolly well did.

Comments

Dan Kegel (not verified)

Sat, 18/07/2015 - 20:21

Did you have to handle taxonomies? Were you migrating from a remote machine? I got stuck because migrate_d2d doesn't seem to allow migrating taxonomies from a remote machine ( https://www.drupal.org/node/2531872 ). I could just export and import the taxonomies via csv, but then migrate_d2d wouldn't know how to map tid's and vid's when migrating nodes.

jp.stacey

Tue, 21/07/2015 - 08:30

It's not at all clear what you mean by "migrating taxonomies": do you mean migrating each vocabulary "container" for the terms, or the terms themselves? Your link is also broken.

If the former, then no, because there were only half a dozen vocabularies and they were quick to set up in D7 (also, they needed to be rearranged). If the latter, then yes. To migrate terms, you can either:

All of this, by the way, has nothing to do with where the database is stored (remote machine or otherwise): that's handled at a completely different level. If you've managed to get access to the data at that lower level, then Migrate doesn't really care where the data is coming from.